- Research Article

- Open access


Key-Dependent JPEG2000-Based Robust Hashing for Secure Image Authentication

EURASIP Journal on Information Security volume 2008, Article number: 895174 (2008)

Abstract

We discuss a robust image authentication scheme based on a hash string constructed from leading JPEG2000 packet data. Motivated by attacks against the approach, key-dependency is added by employing a parameterized lifting scheme in the wavelet decomposition stage. Attacks can be prevented effectively in this manner, and the security of the scheme in terms of unicity distance is assumed to be high. Key-dependency, however, can lead to reduced sensitivity of the scheme. This effect has to be compensated by an increase of the hash length, which in turn decreases robustness.

1. Introduction

The widespread availability of digital image and video data has opened a wide range of possibilities to manipulate these data. Compression algorithms usually change image and video data without leaving perceptual traces. Additionally, different image processing and image manipulation tools offer a variety of possibilities to alter image data without leaving traces which are recognizable by the human visual system.

In order to ensure the integrity and authenticity of digital visual data, algorithms have to be designed which consider the special properties of such data types. On the one hand, such an algorithm should be robust against compression and format conversion, since such operations are an integral part of handling digital data (therefore, such techniques are termed "robust authentication," "soft authentication," or "semifragile authentication"). On the other hand, such an algorithm should be able to detect a large number of different intentional manipulations of such data.

Classical cryptographic tools to check for data integrity, like the cryptographic hash functions MD5 or SHA, are designed to depend strongly on every single bit of the input data. While this property is important for a large class of digital data (e.g., compressed text, executables), classical hash functions cannot provide any form of robustness and are therefore not suited for typical multimedia data.

To account for these properties, new techniques are required which do not assure the integrity of the digital representation of visual data but its visual appearance or perceptual content. In the area of multimedia security, two types of approaches have been proposed so far: semifragile watermarking and robust/perceptual/visual multimedia hashes.

The use of robust hash algorithms for media authentication has been extensively researched in recent years. A number of different algorithms [1–9] have been proposed and discussed in literature.

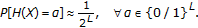

Similar to cryptographic hash functions, robust hash functions for image authentication should satisfy 4 major requirements [10] (where  denotes probability,

denotes probability,  is the hash function,

is the hash function,  are images,

are images,  and

and  are hash values, and

are hash values, and  represents binary strings of length

represents binary strings of length  ) as follows.

) as follows.

-

(1)

Equal distribution of hash values holds

(1)

(1) -

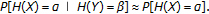

(2)

Pairwise independence for visually different images

and

and  :

:  holds

holds (2)

(2) -

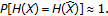

(3)

Invariance for visually similar images

and

and  holds

holds (3)

(3)To fulfill this requirement, most proposed algorithms try to extract image features which are invariant to slight global modifications like compression or filtering.

-

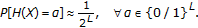

(4)

Distinction of visually different images

and

and  holds

holds (4)

(4)This final requirement also means that given an image

, it is almost impossible to find a visually different image

, it is almost impossible to find a visually different image  with

with  (or even

(or even  ). In other words, it should be impossible to create a forgery which results in the same hash value as the original image.

). In other words, it should be impossible to create a forgery which results in the same hash value as the original image.

A robust visual hashing scheme usually relies on a feature extraction technique as its initial processing stage; transforms like the DCT or the wavelet transform [7] are often used for this purpose. Subsequently, the features (e.g., a set of carefully selected transform coefficients) are further processed to increase robustness and/or reduce dimensionality (e.g., decoding stages of error-correcting codes are often used for this purpose). Note that the visual features selected according to requirement (3) are usually publicly known and can therefore be modified. This might threaten security, as the hash value could be adjusted maliciously to match that of another image.

For this reason, security has always been a major design and evaluation criterion [3, 9, 11] for these algorithms. Several attacks on popular algorithms have been proposed, and countermeasures to these attacks have been developed. A key problem in the construction of secure hash values is the selection of image features that are resistant to common transformations. To ensure the algorithms' security, these features are required to be key-dependent and must not be computable without knowledge of the key used for hash construction. Key-dependency schemes used in the construction of robust hashes include key-dependent transformations [1, 4, 12], pseudorandom permutation of the data [13], randomized statistical features [8–10], and randomized quantization/clustering [14]. The majority of these approaches add key-dependency to the feature extraction stage; only the latter technique randomizes the actual hash string generation stage. Nevertheless, even key-dependent robust hashing schemes have been successfully attacked. For example, the visual hash function (VHF) [1] projects image blocks onto key-dependent patterns to achieve key-dependency. A security weakness of VHF has been pointed out and resolved by adding block interdependencies to the algorithm [6]. As a second example, we mention the strategy of achieving key-dependency by pseudorandom partitioning of wavelet subbands before the computation of statistical features [9]. An attack against this scheme has been demonstrated [15]; it can be countered by employing key-dependent wavelet transforms [12] or by using overlapping, nondisjoint tiling. Recently, generic ways to assess the security of visual hash functions have been proposed based on differential entropy [8] and unicity distance [16].

In this work, we investigate the security of a JPEG2000-based robust hashing scheme which has been proposed in earlier works [17, 18]. We describe severe attacks against the original scheme and propose a key-dependent lifting parameterization in the wavelet transform stage of JPEG2000 encoding as the key-dependency scheme for JPEG2000-based robust hashing. We discuss robustness and sensitivity of the resulting approach and show the improved attack resistance of the key-dependent scheme. Note that we restrict our investigations to the features extracted from the JPEG2000 bitstream themselves and treat them as the actual hash string, even though a final processing stage (e.g., one eliminating redundancy) has not yet been applied. After reviewing JPEG2000 basics, Section 2 discusses various aspects and variants of JPEG2000-based hashing schemes and presents the attack against the approach covered in this work. In Section 3, the employed lifting parameterization is briefly described. Subsequently, we discuss properties of the key-dependent hashing approach and provide experimental evidence for its improved attack resistance; its actual key-dependency and unicity distance are also discussed. Section 4 concludes this paper.

2. JPEG2000-Based (Robust) Hashing

Most robust hashing techniques use a custom and dedicated procedure for hash generation which differs substantially from one technique to the other. Several techniques have been proposed using the wavelet transform as a first stage in feature extraction (e.g., [3, 9, 10]). Employing a standardized image coding technique like JPEG2000 (which is based on a wavelet transform as well) for feature extraction offers the following advantages.

- (1) Widespread knowledge of the properties of the corresponding bitstream is available.
- (2) A vast hardware (e.g., the Analog Devices ADV202 chip) and software (official reference implementations like JJ2000 or JasPer and additional commercial codecs) repository is available.
- (3) In case visual data is already given in JPEG2000 format, the hash value may be extracted with negligible effort (parsing the bitstream and extracting the hash data). In case any other visual data format is given, JPEG2000 compression simply has to be applied before extracting the features from the bitstream (this is the usual way JPEG2000-based hashing is applied).

2.1. JPEG2000 Basics

The JPEG2000 [19] image coding standard uses the wavelet transform as its energy compaction method. JPEG2000 may be operated in lossy or lossless mode (using a reversible integer transform in the latter case), and the wavelet decomposition depth may also be defined. The major difference from previously proposed zerotree wavelet-based image compression algorithms such as EZW or SPIHT is that JPEG2000 operates on independent, nonoverlapping blocks of transform coefficients ("codeblocks"). After the wavelet transform, the coefficients are (optionally) quantized and encoded on a codeblock basis using the EBCOT scheme, which makes distortion scalability possible. Thereby, the coefficients are grouped into codeblocks, and these are encoded bitplane by bitplane, each bitplane with three coding passes (except the first bitplane, which is coded with a single cleanup pass). While the arithmetic encoding of the codeblocks is called Tier-1 coding, the generation of the rate-distortion optimal final bitstream with its scalable structure is called Tier-2 coding (see also Figure 2). The codeblock size can be chosen arbitrarily within certain restrictions.
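The bitplane structure described above determines how many Tier-1 coding passes a codeblock produces. The following Python sketch (function names are our own, hypothetical helpers) counts the magnitude bitplanes needed for a codeblock of quantized integer coefficients and the resulting number of coding passes, assuming the EBCOT rule of a single cleanup pass on the most significant nonzero bitplane and three passes on every later one. It ignores rate-distortion truncation, which in practice drops trailing passes.

```python
from typing import Iterable


def magnitude_bitplanes(coeffs: Iterable[int]) -> int:
    """Number of magnitude bitplanes needed for the largest |coefficient| in a codeblock.

    Sign-magnitude coding is assumed; an all-zero codeblock needs no bitplanes.
    """
    peak = max((abs(c) for c in coeffs), default=0)
    return peak.bit_length()


def coding_passes(num_bitplanes: int) -> int:
    """EBCOT pass count: one cleanup pass on the first (most significant) bitplane,
    then significance propagation, magnitude refinement and cleanup on each later one."""
    if num_bitplanes <= 0:
        return 0
    return 1 + 3 * (num_bitplanes - 1)


block = [0, -5, 12, 3]          # toy codeblock of quantized coefficients
k = magnitude_bitplanes(block)  # |12| = 0b1100 -> 4 bitplanes
print(k, coding_passes(k))      # 4 10
```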

The final JPEG2000 bitstream (see Figure 1) is organized as follows. The main header is followed by packets of data (packet bodies), each of which is preceded by a packet header. A packet body contains CCPs (codeblock contributions to packet) of codeblocks that belong to the same image resolution (wavelet decomposition level) and layer (layers roughly correspond to successive quality levels). Depending on the arrangement of the packets, different progression orders may be specified. Resolution and layer progression orders are the most important progression orders for grayscale images.
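To make the difference between the two progression orders concrete, the sketch below lists the (layer, resolution) indices of the packets in bitstream order for a single grayscale component with one precinct per resolution (a simplifying assumption; real codestreams may contain several precincts). Resolution 0 denotes the lowest-resolution LL band, so a $d$-level decomposition has $d+1$ resolutions. LRCP and RLCP are the standard JPEG2000 names for layer-progressive and resolution-progressive ordering.

```python
from typing import List, Tuple


def packet_order(num_layers: int, num_resolutions: int, progression: str) -> List[Tuple[int, int]]:
    """(layer, resolution) pairs in bitstream order for one component and one precinct."""
    if progression == "LRCP":  # layer progression: every resolution of layer 0 comes first
        return [(layer, res) for layer in range(num_layers) for res in range(num_resolutions)]
    if progression == "RLCP":  # resolution progression: every layer of resolution 0 comes first
        return [(layer, res) for res in range(num_resolutions) for layer in range(num_layers)]
    raise ValueError(f"unsupported progression order: {progression}")


print(packet_order(2, 3, "LRCP"))
# [(0, 0), (0, 1), (0, 2), (1, 0), (1, 1), (1, 2)]
print(packet_order(2, 3, "RLCP"))
# [(0, 0), (1, 0), (0, 1), (1, 1), (0, 2), (1, 2)]
```

With layer progression, the leading packets therefore carry the first quality layer of every resolution before any refinement data appears.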

2.2. JPEG2000 Authentication and Hashing

Authentication of the JPEG2000 bitstream has been described in previous work. In [20], it is proposed to apply SHA-1 to all packet data and to append the resulting hash value to the JPEG2000 bitstream after the final termination marker. In contrast to this approach, when focusing on robust authentication, it turns out to be difficult to insert the hash value directly into the codestream itself (e.g., after termination markers), since any operation which involves decoding and recompression would lose the original hash value. The only applications which do not destroy the hash value are purely bitstream-oriented ones, like rate adaptation transcoding by simply dropping parts of the packet data. As a consequence, a possible solution to this dilemma would be to use a robust watermarking scheme to embed the hash value into the codestream, provided that the embedding does not change the features involved in computing the hash value. A different solution would be to signal the hash value in the context of a JPSEC [21] description. An elegant technical solution showing how authentication can be applied to the entire codestream while remaining valid for parts of it (e.g., scaled versions) has been derived using Merkle hash trees [22] (and tested with MD5 and RSA).

Recently, it has been suggested to use JPEG2000-related information for content-based image search and retrieval in the context of JPSearch, a recent standardization effort of the JPEG committee. General wavelet-based features which can be computed during JPEG2000 compression have been proposed for image indexing and retrieval (cf. [23]). However, this strategy does not take advantage of the particular information available in JPEG2000 codestreams. The packet header information is specific to the visual content, and specific enough to be used as a fingerprint/hash for content search. Some suggestions have been made in this direction in the context of indexing, retrieval, and classification. In [23], the number of bytes spent on coding each subband ("information content") is used for texture classification. Similarly, in [24], a set of classifiers based on the packet header (codeblock entropy) and packet body data (wavelet coefficient distribution) is used to retrieve specified textures from JPEG2000 image databases. In [25], the number of leading bitplanes is used as a fingerprint to retrieve specific images (means and variances of the number of nonzero bitplanes in the codeblocks of each subband are computed). Finally, in [26], the same authors additionally propose to use significance bitmaps of the coefficients and significant-bits histograms.
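The bitplane-based fingerprint of [25] can be sketched as follows. We assume that the codeblocks of each subband are available as lists of quantized integer coefficients (the dictionary layout and subband names are hypothetical), and we use the population variance, which may differ from the exact definition used in [25].

```python
from statistics import mean, pvariance
from typing import Dict, List, Tuple


def nonzero_bitplanes(block: List[int]) -> int:
    """Number of nonzero magnitude bitplanes of one codeblock."""
    return max((abs(c) for c in block), default=0).bit_length()


def bitplane_fingerprint(subbands: Dict[str, List[List[int]]]) -> Dict[str, Tuple[float, float]]:
    """Per subband: mean and variance of the number of nonzero bitplanes over its codeblocks."""
    fingerprint = {}
    for name, blocks in subbands.items():
        counts = [nonzero_bitplanes(b) for b in blocks]
        fingerprint[name] = (mean(counts), pvariance(counts))
    return fingerprint


toy = {"LL": [[3, -1], [8, 0]], "HL1": [[0, 0], [1, -2]]}
fp = bitplane_fingerprint(toy)
# LL: bitplane counts [2, 4] -> mean 3, variance 1; HL1: counts [0, 2] -> mean 1, variance 1
```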

In the following, we restrict our attention to a robust hashing scheme proposed in earlier work [17, 18] which employs parts of the JPEG2000 packet body data as a robust hash; we denote this approach JPEG2000 PBHash (Packet Body Hash). An image given in an arbitrary format is converted into raw pixel data and compressed into JPEG2000 format. Due to the embeddedness property of the JPEG2000 bitstream, the perceptually more relevant bitstream parts are positioned at the very beginning of the file. Consequently, the bitstream is scanned from beginning to end, and the data of each data packet (as they appear in the bitstream, excluding any header structures) are collected sequentially and concatenated to be used as visual feature values (see Figure 2).
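A minimal sketch of this extraction step, assuming the codestream has already been parsed into packets in bitstream order (a real implementation needs a JPEG2000 parser; the `Packet` type and `pb_hash` function are hypothetical): packet headers are skipped, and packet bodies are concatenated until the requested hash length is reached.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Packet:
    header: bytes  # packet header: not part of the hash
    body: bytes    # packet body: codeblock contributions (CCPs)


def pb_hash(packets: List[Packet], hash_len: int) -> bytes:
    """Concatenate packet bodies in bitstream order and keep the first hash_len bytes."""
    collected = bytearray()
    for packet in packets:
        if len(collected) >= hash_len:
            break  # enough data collected; later packets are never inspected
        collected.extend(packet.body)
    return bytes(collected[:hash_len])


stream = [Packet(b"\xc0", b"\x01\x02"), Packet(b"\x80", b""), Packet(b"\xc0", b"\x03\x04\x05")]
print(pb_hash(stream, 4).hex())  # 01020304
```

The early exit reflects the fact that only the leading packet data matter, which is also why encoding can be stopped once enough data has been produced.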

Note that it is not required to actually perform the entire JPEG2000 compression process: as soon as the amount of data required for hash generation has been output by the encoder, compression may be stopped. JPEG2000 PBHash has been demonstrated to exhibit high robustness against JPEG2000 recompression and JPEG compression [17] and provides satisfying sensitivity with respect to intentional local image modifications [18]. As expected from the properties of the wavelet transform, high sensitivity to global geometric alterations and rescaling has also been reported [18] (as determined using the Stirmark [27] attack suite). While the latter properties are prohibitive for the use of JPEG2000 PBHash in the content search scenario, these specific robustness limitations are less critical for authentication purposes. In this scenario, a specific image size can be enforced (e.g., by image interpolation) before the hash is applied; in a nonautomated scenario, image registration may be conducted before the actual authentication process.

The visual information contained in the hash string (i.e., concatenated packet body data) may be visualized by decoding the corresponding part of the bitstream by a JPEG2000 decoder (including the header information for providing the required context information to the decoder). Figure 3 shows the visual information corresponding to a hash length of 50 bytes of the images displayed in Figures 5–7 (in fact, the images shown are severely compressed JPEG2000 images).

Unless noted otherwise, we use JPEG2000 with layer progression order, an output bitrate of 1 bit per pixel, and wavelet decomposition level 5 to generate the hash string. The hash length and the wavelet decomposition depth can be used as parameters to control the tradeoff between robustness and sensitivity of the hashing scheme [14]; obviously, a shorter hash leads to increased robustness and decreased sensitivity (see [17, 18] for detailed results). A shallow decomposition depth is not at all suited for the JPEG2000 PBHash, since settings of this type lead to a large LL subband. For a large LL band, the hash consists only of LL coefficient data corresponding to the upper part of the image (due to the size of the subband and the raster-scan order used in the bitstream assembly stage). Therefore, a certain minimal decomposition depth (e.g., down to decomposition level 3) is a must, and a short hash string requires a higher decomposition depth for sensible employment of the JPEG2000 PBHash in order to avoid the phenomenon described before.
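A quick calculation illustrates why shallow decompositions are problematic. The sketch below computes the LL subband dimensions after $d$ dyadic decomposition levels using ceiling division, as JPEG2000 does for an image anchored at the origin; the 512 × 512 image size is an assumption chosen for illustration.

```python
import math
from typing import Tuple


def ll_size(width: int, height: int, levels: int) -> Tuple[int, int]:
    """LL subband dimensions after `levels` dyadic decompositions (image origin at 0)."""
    return math.ceil(width / 2 ** levels), math.ceil(height / 2 ** levels)


for d in (3, 5, 7):
    w, h = ll_size(512, 512, d)
    print(f"depth {d}: LL is {w}x{h} = {w * h} coefficients")
# depth 3: LL is 64x64 = 4096 coefficients
# depth 5: LL is 16x16 = 256 coefficients
# depth 7: LL is 4x4 = 16 coefficients
```

For comparison, a 50-byte hash contains only 400 bits. With 4096 LL coefficients at depth 3, it is plausible that such a hash is filled by LL data alone, consistent with the effect described above, whereas at depth 7 the LL band is exhausted almost immediately.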

In Figure 4, we visualize the distribution of the Hamming distances computed among hashes of 200 uncorrelated images (i.e., perceptually entirely unrelated) for three parameter settings: hash-length 16 bytes with decomposition level 7, hash-length 50 bytes with decomposition level 5, and hash-length 128 bytes with decomposition level 6.
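The Hamming distances reported in this paper are normalized to [0, 1], so that hashes of unrelated images are expected near 0.5. A sketch of this bitwise, normalized distance and of the pairwise evaluation over a set of hashes (helper names are ours) could look as follows.

```python
from itertools import combinations
from typing import List


def hamming_distance(a: bytes, b: bytes) -> float:
    """Fraction of differing bits between two equally long hash strings."""
    if len(a) != len(b) or not a:
        raise ValueError("hash strings must be non-empty and of equal length")
    differing = sum(bin(x ^ y).count("1") for x, y in zip(a, b))
    return differing / (8 * len(a))


def pairwise_distances(hashes: List[bytes]) -> List[float]:
    """Distances between all unordered pairs, e.g. for a histogram as in Figure 4."""
    return [hamming_distance(h1, h2) for h1, h2 in combinations(hashes, 2)]


print(hamming_distance(b"\x00\x00", b"\xff\x00"))       # 0.5
print(pairwise_distances([b"\x00", b"\xff", b"\x0f"]))  # [1.0, 0.5, 0.5]
```

With 200 images this yields 19,900 pairs per setting; for a 50-byte hash, a distance of 0.2 corresponds to 80 of 400 bits.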

It can be observed that the distributions of the Hamming distances are centered around 0.5, as desired. The variance of the distribution is larger for the more robust settings, which is also to be expected. The influence of the wavelet decomposition level cannot be derived immediately from these results, but it is known from earlier experiments [18] that lower decomposition levels tend to result in higher robustness (please refer also to the results in Section 3.2 on this issue). The reason is that a low decomposition depth causes the hash string to consist mainly of low-frequency coefficient data, while differences caused by subtle image modifications are found in higher-frequency coefficient data.

2.3. Attacks Against the JPEG2000 PBHash

In order to demonstrate the definite need for key-dependency in the JPEG2000 PBHash procedure, we conduct attacks against the approach using the slightly modified images displayed in Figures 5–7.

With the standard hash settings (length 50 bytes with decomposition level 5), the Hamming distance between original and modified images is 0.2 for Goldhill, 0.255 for Plane, and 0.1575 for Lena. Clearly, these modifications are detected when the detection threshold is set to a sensible value.

A potential attacker aims at maliciously tampering with the modified image in such a way that its hash string becomes similar or even identical to the hash string of the original image while its visual content is preserved (the result is the attacked image). In this way, the attacked image would be rated as authentic by the hashing algorithm.

The attack actually conducted works as follows. Both the original and the modified images are considered in a JPEG2000 representation matching the parameters used for the JPEG2000 PBHash (if they do not match this condition, they are converted to JPEG2000). Then the leading part of the modified image's bitstream (corresponding to the packet body data used for hashing) is replaced by the corresponding part of the original image's bitstream, resulting in the attacked image. Obviously, if the attacked image remains in JPEG2000 format, its hash exactly matches that of the original. But even if both the original and the attacked images are converted back to their source format (e.g., PNG) and the JPEG2000 PBHash is applied subsequently, it turns out that the hash strings are still identical. Figure 8 shows the corresponding attacked Goldhill and Lena images. Their hash strings are identical to those of the respective originals.
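The splicing attack can be sketched on a packet-level model of the codestream. Under the assumption that both images were encoded with identical JPEG2000 parameters (so that their packet sequences line up) and that splicing happens at packet boundaries, the attacker copies just enough leading packets of the original to cover the hash and keeps the remaining packets of the modified image. The `Packet` type and the helper names are hypothetical, and whether this matches the exact byte-level procedure used for Figure 8 is an assumption.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Packet:
    header: bytes
    body: bytes


def pb_hash(packets: List[Packet], hash_len: int) -> bytes:
    """Key-independent PBHash: first hash_len bytes of the concatenated packet bodies."""
    return b"".join(p.body for p in packets)[:hash_len]


def splice_attack(original: List[Packet], modified: List[Packet], hash_len: int) -> List[Packet]:
    """Replace leading packets of `modified` by those of `original` until the hash is covered."""
    covered, k = 0, 0
    while k < len(original) and covered < hash_len:
        covered += len(original[k].body)
        k += 1
    return original[:k] + modified[k:]


orig = [Packet(b"h", b"AB"), Packet(b"h", b"CD"), Packet(b"h", b"EF")]
mod = [Packet(b"h", b"ab"), Packet(b"h", b"cd"), Packet(b"h", b"ef")]
attacked = splice_attack(orig, mod, hash_len=3)
print(pb_hash(attacked, 3) == pb_hash(orig, 3))  # True
print(attacked[2].body)                          # b'ef' (detail data of the modified image kept)
```

Nothing in this procedure requires a secret, which is precisely the weakness that a key-dependent transform is meant to remove.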

This attack is even more severe when applied not to an original image and a slightly modified version of it, but to completely different images. In this case, we denote the attack as a "collision attack," since we use the same approach to generate two visually entirely distinct images exhibiting an identical JPEG2000 PBHash. Two arbitrary images (an original image and an attacked image) are either converted to or already given in a corresponding JPEG2000 representation. The attacked image should be modified to have a hash similar to that of the original image. To accomplish this, the first part of the bitstream of the attacked image is replaced by the first part of the bitstream of the original image. Figure 9 visualizes the result for the Plane and Lena images, respectively. In case the images were already present in JPEG2000 format and remain in this format, the first image exhibits a hash string identical to that of the Lena image, and the second image's hash is identical to that of the Plane image. Obviously, this does not correspond to visual perception.

This attack makes it possible to modify a given original image in such a way that its hash matches that of an arbitrary different image while the visual appearance of the attacked image stays close to the original. This can be considered an extremely serious threat to the reliability of the hashing scheme. However, the hash values can only be made identical if no format conversion is applied. If the attacked and original images have to be converted back to a different source format, the resulting Hamming distances between the original and attacked versions are 0.235 and 0.113. This is in contrast to the previous case of originals and slightly modified versions, where the hash strings remained identical even after format conversion. Still, these distances are significantly below the values observed among uncorrelated images (cf. Figure 4).

The demonstrated attack shows that the JPEG2000 PBHash is highly insecure in its original form and requires a significant security improvement to be useful as a reliable authentication hashing scheme.

3. Key-Dependent JPEG2000 PBHash

The concept of secret transform domains has been exploited as a key-dependency scheme to some degree in the area of multimedia security in recent years. Fridrich [28, 29] introduced the concept of DCT-type key-dependent basis functions in order to protect a watermark from hostile attacks. Unnikrishnan and Singh [30] suggest using secret fractional Fourier domains to encrypt visual data, a technique which was also used to embed watermarks in an unknown domain [31]. The many degrees of freedom available in designing a wavelet transform have also been exploited in a similar manner for image and video encryption [32, 33] and to secure watermarking schemes for copy protection [34, 35] and authentication [36].

In recent works [12, 15, 37], we have proposed to use Pollen's orthogonal filter parameterization as a generic key-dependency scheme for wavelet-based visual hash functions. In the case of an authentication hash, this strategy proved to be successful [12, 15], while it did not work out for a CBIR hash [37] due to the high robustness of the original scheme. Since the orthogonal Pollen parameterization does not easily integrate with the lifting-based biorthogonal JPEG2000 filters, we propose a different strategy in this work, compliant with the JPEG2000 Part 2 compression pipeline. JPEG2000 Part 2 allows JPEG2000 to be extended in various ways. One possibility is to employ wavelet filters other than those specified in Part 1 of the standard (e.g., user-designed filters) and to vary the filters during decomposition; this is the key-dependency scheme discussed in the following subsection.

Using a key-dependent hashing scheme, the advantage of the JPEG2000 PBHash to generate hash strings from already JPEG2000-encoded visual data by simple parsing and concatenation is lost. An image present as JPEG2000 file needs to be JPEG2000-decoded (with the standard filters) into raw pixel data and reencoded into the key-dependent JPEG2000 domain (with the key-dependent filters) for generating the corresponding hash string.

3.1. Wavelet Lifting Parametrization

We use a lifting parameterization of the CDF 9/7 wavelet filter, which is described in [32] based on the work of Zhong et al. [38], Daubechies and Sweldens [39], and Cohen et al. [40]. Conditions (5) for the lowpass and highpass filter taps $h$ and $g$ are formulated in [40]. A possible factorization of the CDF 9/7 wavelet into lifting steps is described in [39]. These lifting steps can be used to express the filter taps of $h$ and $g$ as functions of the four parameters $\alpha$, $\beta$, $\gamma$, and $\delta$ and a scaling factor $\zeta$. A parameterization which depends only on a single parameter $\alpha$ can be derived from these lifting steps together with condition (5), as described in [38]; it is given by the functions (7).

For $\alpha = -1.586134342$, the original CDF 9/7 filter is obtained. The parameterization comes at virtually no additional computational cost: only the functions (7) have to be evaluated, and the lowpass and highpass synthesis filter taps for normalization have to be calculated. For a discussion of the applicability of certain parts of the range of $\alpha$ and of the resulting keyspace, see [32]; here, we restrict the admissible $\alpha$ values to a bounded subinterval of this range.
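As an illustration of how a one-parameter lifting family behaves (and not as a reproduction of the functions (7) of [38]), the following Python sketch implements a family obtained from these assumptions: $\alpha$ is the free parameter, the analysis highpass annihilates polynomials up to degree 3 (four vanishing moments), and the analysis lowpass keeps a zero at $\omega = \pi$. At $\alpha \approx -1.586134342$ it reproduces the standard CDF 9/7 lifting constants, but it is not guaranteed to coincide with the parameterization of [32, 38] elsewhere. The scaling convention and all names are assumptions as well.

```python
from typing import List, Tuple

CDF97_ALPHA = -1.586134342059924  # first lifting coefficient of the standard CDF 9/7 filter


def lifting_params(alpha: float) -> Tuple[float, float, float, float]:
    """Return (beta, gamma, delta, k) for a given alpha.

    Assumed design conditions: the analysis highpass annihilates polynomials up to
    degree 3 and the analysis lowpass has a zero at omega = pi. k is the DC gain of
    the lowpass branch before scaling.
    """
    a = 1.0 + 2.0 * alpha
    c = 1.0 + 4.0 * alpha
    if abs(a) < 1e-9 or abs(c) < 1e-9:
        raise ValueError("alpha = -1/2 and alpha = -1/4 are singular for this family")
    beta = -1.0 / (4.0 * a * a)
    gamma = -a * a / c
    delta = c * (3.0 + 6.0 * alpha + 8.0 * alpha * alpha) / (16.0 * a ** 3)
    k = c / (2.0 * a)
    return beta, gamma, delta, k


def forward_lifting_1d(x: List[float], alpha: float) -> Tuple[List[float], List[float]]:
    """One level of the parameterized 9/7 lifting on an even-length signal.

    Clamping neighbour indices is equivalent to whole-sample symmetric extension
    for the indices used here. Scaling convention (assumed): lowpass / k and
    highpass * k, which gives the lowpass unit DC gain.
    """
    if len(x) < 2 or len(x) % 2:
        raise ValueError("need an even-length signal")
    beta, gamma, delta, k = lifting_params(alpha)
    s, d = list(x[0::2]), list(x[1::2])
    n = len(s)

    def at(v: List[float], i: int) -> float:
        return v[min(max(i, 0), n - 1)]

    d = [d[i] + alpha * (s[i] + at(s, i + 1)) for i in range(n)]  # predict 1
    s = [s[i] + beta * (at(d, i - 1) + d[i]) for i in range(n)]   # update 1
    d = [d[i] + gamma * (s[i] + at(s, i + 1)) for i in range(n)]  # predict 2
    s = [s[i] + delta * (at(d, i - 1) + d[i]) for i in range(n)]  # update 2
    return [v / k for v in s], [v * k for v in d]


print(lifting_params(CDF97_ALPHA))
# approximately (-0.05298, 0.88291, 0.44351, 1.23017): the CDF 9/7 lifting constants
```

Because every $\alpha$ in this family keeps the vanishing moments of the highpass, smooth image regions still produce near-zero detail coefficients, while the exact coefficient values, and hence the packet body bytes, change with $\alpha$.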

We do not use only one single key-dependent wavelet filter in the decomposition. Instead, different key-dependent filters are used at each decomposition level of the wavelet transform and for each decomposition orientation (i.e., horizontal and vertical). These techniques originate from content-adaptive image compression [41] and are denoted as "nonstationary" and "inhomogeneous" multiresolution analyses. Consequently, we actually employ $2d$ filters during a $d$-level wavelet decomposition; the corresponding $\alpha$ values are all generated by a pseudorandom number generator from a single seed denoted as the "key." However, all $2d$ values of $\alpha$ in fact serve as potential key material for our key-dependent JPEG2000 PBHash, and the approximation subband data in particular depends on all of them.
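The key schedule just described can be sketched as follows: a pseudorandom number generator seeded with the key draws one $\alpha$ per decomposition level and orientation, giving $2d$ values for a $d$-level decomposition. The admissible interval `alpha_range` is a hypothetical placeholder, and Python's `random` module (a Mersenne Twister) is used only for illustration; it is not a cryptographically secure generator, so a deployed scheme would more likely derive the values from a keyed cryptographic primitive.

```python
import random
from typing import Dict, Tuple


def filter_schedule(key: int, levels: int,
                    alpha_range: Tuple[float, float]) -> Dict[Tuple[int, str], float]:
    """One alpha per (decomposition level, orientation), all drawn from a PRNG seeded with `key`."""
    lo, hi = alpha_range
    rng = random.Random(key)  # illustration only: not a cryptographically secure generator
    return {(level, orientation): rng.uniform(lo, hi)
            for level in range(1, levels + 1)
            for orientation in ("horizontal", "vertical")}


schedule = filter_schedule(key=1234, levels=5, alpha_range=(-2.0, -1.2))  # hypothetical range
print(len(schedule))  # 10 filters for a 5-level decomposition
```

Seeding a dedicated `random.Random` instance keeps the schedule reproducible for a given key without touching the global generator state.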

In the following, we investigate the impact of choosing different keys on the resulting hash string, that is, whether the resulting hash really depends sufficiently on the key used during JPEG2000 compression. We take an image and generate its hash string with specified settings (i.e., a fixed number of bytes extracted from the JPEG2000 bitstream and a certain wavelet decomposition depth); this procedure is repeated for 100 randomly chosen keys, and the Hamming distances among all hash strings are computed. Figure 10 shows the resulting Hamming distance histograms for the images Goldhill and Lena, where the hash string is only 16 bytes long and decomposition depth 7 is selected. The first plot in Figure 10 displays the Hamming distances among the hash strings of 100 randomly chosen keys, where all corresponding distances of 20 test images are accumulated (this set of images includes Goldhill, Lena, Plane, Mandrill, Barbara, Boats, and several other test images).
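The key-sweep experiment can be expressed as a small harness. In this sketch, `hash_fn(image, key)` stands for a key-dependent JPEG2000 PBHash, which is not implemented here; the toy stand-in used in the example is a truncated SHA-256 of key and image bytes, chosen only so that the harness runs, and it is not a robust image hash.

```python
import hashlib
from itertools import combinations
from typing import Callable, Iterable, List

HashFn = Callable[[bytes, int], bytes]


def hamming_distance(a: bytes, b: bytes) -> float:
    """Normalized bitwise Hamming distance between equally long hashes."""
    if len(a) != len(b) or not a:
        raise ValueError("hash strings must be non-empty and of equal length")
    return sum(bin(x ^ y).count("1") for x, y in zip(a, b)) / (8 * len(a))


def key_sweep(images: Iterable[bytes], hash_fn: HashFn, keys: List[int]) -> List[float]:
    """Hash each image with every key; collect all pairwise distances, accumulated over images."""
    distances: List[float] = []
    for image in images:
        hashes = [hash_fn(image, key) for key in keys]
        distances.extend(hamming_distance(h1, h2) for h1, h2 in combinations(hashes, 2))
    return distances


def toy_hash(image: bytes, key: int) -> bytes:
    """Stand-in only: 16-byte truncated SHA-256, NOT a robust image hash."""
    return hashlib.sha256(key.to_bytes(4, "big") + image).digest()[:16]


d = key_sweep([b"image-a", b"image-b"], toy_hash, keys=list(range(100)))
print(len(d))  # 2 images x 4950 key pairs = 9900
```

A hash that ignores the key would produce only zeros in this histogram; the small distances reported for 16-byte hashes are the intermediate case.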

It is obvious that the key-dependency scheme works in principle; however, several pairs of hash strings exhibit very small distances. Especially when compared to the corresponding Hamming distance histogram for entirely different images (see Figure 4, left), the distribution is shifted to the left, is much broader, and exhibits many small values. The situation is much improved when increasing the hash length to 50 bytes, as displayed in Figure 11. This corresponds well to our expectations, since the longer hash string includes more high-frequency coefficient data, which reflects the differences among the filters much more significantly than the smoothed approximation subband data. The Hamming distance histograms are shown in accumulated form for the same set of 20 test images as before, varying the wavelet decomposition depth during hash generation.


The histograms hardly contain Hamming distances below 0.2 for any of the three decomposition depths with this hash length. Increasing the hash length even further to 128 bytes with decomposition depth 6, as shown in Figure 12 for the Goldhill and Lena images and the set of 20 test images, even resolves the undesired effects seen before. Most distance values are clearly above 0.3, and the histograms are clearly unimodal. Still, the distributions of the Hamming distances among different images in Figure 4 are better centered and have a lower variance. As a consequence, we recommend using a hash length of at least 50 bytes when key-dependency of the resulting hash string is important.

3.2. Properties: Sensitivity and Robustness

Sensitivity is the property of a hashing scheme to detect image alterations; for the JPEG2000 PBHash, high sensitivity means that a small number of packet body bytes is required to detect image manipulations. Robustness, on the other hand, is the property of a hashing scheme to maintain an identical hash string even under common image processing operations like compression; for the JPEG2000 PBHash, high robustness means that a large number of packet body bytes is required to detect such operations. While sensitivity to intentional image modifications and robustness with respect to image compression have been discussed in detail for the key-independent JPEG2000 PBHash in previous work [17, 18], the impact of the different filters used in the key-dependency scheme on these properties is not yet clear. Therefore, we conduct several experiments on these issues.

The first experiment investigates sensitivity to the modification of the Goldhill image shown in Figure 5. We apply the JPEG2000 PBHash to the original and the modified Goldhill images with the same key and record the number of bytes required to detect the modification (i.e., starting from the beginning of the two hash strings, the position of the first unequal byte is recorded). This procedure is repeated for 100 different random keys, and the results for four different decomposition depths are shown in Figure 13 (Figures 14 and 15 show only two decomposition depths each). The solid line represents the value obtained with the key-independent JPEG2000 PBHash, while the dots represent the 100 key-dependent results. Note that (unrealistically) long hashes of 1000 bytes are used in these experiments in order to capture the corresponding behavior well.
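The sensitivity measure used here, the position of the first unequal byte, and a comparison of the keyed results with the standard filter can be sketched as follows (helper names are hypothetical). A result of `None` means that the modification was not detected within the compared length, which this sketch counts as a degradation.

```python
from typing import List, Optional, Tuple


def bytes_to_detect(h_orig: bytes, h_mod: bytes) -> Optional[int]:
    """1-based position of the first differing byte, or None if the hashes agree."""
    for position, (a, b) in enumerate(zip(h_orig, h_mod), start=1):
        if a != b:
            return position
    return None


def compare_to_standard(standard: int, keyed: List[Optional[int]]) -> Tuple[int, int, int]:
    """Count keys that detect the modification earlier, equally early, or later than the standard filter."""
    better = sum(1 for p in keyed if p is not None and p < standard)
    equal = sum(1 for p in keyed if p == standard)
    worse = len(keyed) - better - equal
    return better, equal, worse


print(bytes_to_detect(b"abcd", b"abXd"))        # 3
print(compare_to_standard(3, [1, 3, 5, None]))  # (1, 1, 2)
```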

First, it is obvious from the plots in Figure 13 that sensitivity varies among the different keys employed. Second, there is no clear trend with respect to the sensitivity of the "standard" JPEG2000 filter as compared to the parameterized versions. While for decomposition depths 4 and 5 most parameterized filters seem to degrade sensitivity (i.e., more bytes are required to detect the modifications), decomposition depths 6 and 8 show both improvements and degradations in sensitivity of the parameterized filters as compared to the standard filter. Note that the results discussed for the different decomposition depths are specific to the Goldhill image and its modification, and depend significantly on the kind and severity of the modification performed (e.g., for decomposition depth 5, we notice a sensitivity decrease for the Goldhill image, but for the Lena image, as shown in Figure 15, we observe both improvements and degradations). In fact, it is clear that there are variations and that the "standard" filter is just one of many filters, with no specific properties with respect to sensitivity.

Figure 14 displays the results for decomposition depths 6 and 8 for the Plane image. While decomposition depth 6 seems to improve sensitivity, for depth 8, we notice improvements as well as degradations as compared to the standard filter.

Similarly, for the Lena image in Figure 15, we observe both improvements and degradations with respect to sensitivity for both decomposition depths considered.

The second experiment regarding sensitivity relates the variations caused by the different filters to the type and severity of the modifications shown in Figures 5–7. We use the JPEG2000 PBHash with 128 bytes and decomposition depth 6 and compute the Hamming distances between the original and modified images for 200 random keys (the same key is used for the original and the modification). Figure 16 shows the corresponding results.

The modification performed on the Plane image is rich in contrast and affects a considerable area of the image. As displayed by the middle histogram, this modification is clearly detected for all keys assuming a detection threshold of 0.15 or lower. The modification of the Goldhill image also affects a considerable number of pixels, but the contrast in this area does not change much. Therefore, the detection threshold has to be set to 0.04 to detect the modification for all filters (which, of course, negatively influences robustness). Finally, the modification of the Lena image affects only a few pixels and hardly changes the contrast in the modified areas. Consequently, for some filter parameters, the modification is not detected at all (i.e., the Hamming distance between the hash strings is 0). As with the key-independent JPEG2000 PBHash, sensitivity can be controlled by setting the hash length accordingly. In the key-dependent scheme, the variations among different filters additionally need to be considered, which means that longer hash strings than in the key-independent scheme should be used to guarantee sufficient sensitivity for all filters. Overall, applying the key-dependent hashing scheme with different filters to the same image (see Figures 10–12) results in larger Hamming distances than applying it with the same filter to an original and a slightly modified image (Figure 16).
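The Hamming distances and detection thresholds discussed above can be illustrated with a short sketch. It assumes that the distance is the fraction of differing bits between two equal-length hash strings, which is consistent with distances of about 0.5 between unrelated images; the helper names are hypothetical.

```python
def normalized_hamming(hash_a: bytes, hash_b: bytes) -> float:
    """Fraction of differing bits between two equal-length hash strings."""
    if len(hash_a) != len(hash_b):
        raise ValueError("hash strings must have equal length")
    if not hash_a:
        raise ValueError("hash strings must not be empty")
    differing_bits = sum(bin(a ^ b).count("1") for a, b in zip(hash_a, hash_b))
    return differing_bits / (8 * len(hash_a))


def is_flagged_as_modified(hash_a: bytes, hash_b: bytes, threshold: float) -> bool:
    """Report a modification when the distance exceeds the detection threshold."""
    return normalized_hamming(hash_a, hash_b) > threshold


original = bytes([0b00000000, 0b00000000])
modified = bytes([0b00001111, 0b00000000])  # 4 of 16 bits differ
print(normalized_hamming(original, modified))            # prints 0.25
print(is_flagged_as_modified(original, modified, 0.15))  # prints True
print(is_flagged_as_modified(original, modified, 0.30))  # prints False
```

Lowering the threshold (e.g., to 0.04 for the Goldhill modification) catches subtler changes, but compressed versions with small nonzero distances are then flagged as well, which is the sensitivity/robustness tradeoff at issue here.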

The second property investigated in this subsection is robustness to common image transformations. As a typical example, we select JPEG2000 compression. We apply the JPEG2000 PBHash to the original and compressed Plane images (bitrate 0.5 bpp) with the same key and record the number of bytes required to detect the modification (i.e., starting from the beginning of the two hash strings, the position/number of the first unequal byte is recorded). This procedure is repeated for 100 different random keys and the results for four different decomposition depths are shown in Figure 17 (only two in Figure 18). The solid line represents the value obtained with the key-independent JPEG2000 PBHash while the dots represent 100 key-dependent results.

Similar to the investigations on sensitivity, we notice varying robustness for the parameterized filters (also concerning the relation to the robustness of the "standard" JPEG2000 filter) and inconsistent results for the different decomposition levels. However, the differences are not as pronounced as in the case of sensitivity and the results are similar for different images.

The second experiment regarding robustness relates the variations caused by the different filters to the target bitrate used for compression and the length of the hash string. We use the JPEG2000 PBHash with 16 and 128 bytes and decomposition depths 7 and 6 and compute the Hamming distances between the original and compressed images for 100 random keys (the same key is used for the original and the compressed image). Figure 19 shows the corresponding results for target bitrate 0.5 bpp.

At this bitrate, the 16-byte JPEG2000 PBHash provides good robustness for almost all keys (i.e., almost all Hamming distances are 0). The 128-byte hash string on the other hand produces differences up to 0.5 for some keys so that for this setting, compression robustness cannot be provided. Figure 20 shows corresponding results for a target bitrate of 0.05 bpp. At this low rate, even the 16-byte hash generates differences up to 0.5 and the 128-byte hash results in a histogram distribution similar to the case when different images have been used as input.
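Why a longer hash trades robustness for sensitivity can be seen in a toy sketch. It assumes, following the description of the scheme as a hash built from leading JPEG2000 packet data, that an L-byte hash consists of the first L bytes of the packet body data; the byte streams below are synthetic, not actual codestreams, and the function names are hypothetical.

```python
def normalized_hamming(hash_a: bytes, hash_b: bytes) -> float:
    """Fraction of differing bits between two equal-length hash strings."""
    differing_bits = sum(bin(a ^ b).count("1") for a, b in zip(hash_a, hash_b))
    return differing_bits / (8 * len(hash_a))


def prefix_hash_distance(stream_a: bytes, stream_b: bytes, hash_length: int) -> float:
    """Distance between hashes taken as the leading hash_length bytes of two streams."""
    if min(len(stream_a), len(stream_b)) < hash_length:
        raise ValueError("streams are shorter than the requested hash length")
    return normalized_hamming(stream_a[:hash_length], stream_b[:hash_length])


# Synthetic streams that first differ at byte 40 (a change that only shows up
# in later packet data).
original = bytes(128)
processed = bytes(39) + b"\xff" + bytes(88)
print(prefix_hash_distance(original, processed, 16))   # prints 0.0
print(prefix_hash_distance(original, processed, 128))  # prints 0.0078125
```

A change confined to later packet data is invisible to the 16-byte hash (robust behavior) but visible to the 128-byte hash (sensitive behavior).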

To summarize, we conclude that the key-dependency introduced into the JPEG2000 PBHash has undesired effects on sensitivity and robustness. Because sensitivity varies across the filters used in the hashing scheme, the length of the hash string has to be increased as compared to the key-independent scheme to detect even small modifications reliably. With such hash lengths, compression robustness is already hard to achieve for all filters. So, in a way, adding key-dependency to the scheme comes at the price of a worse tradeoff between sensitivity and robustness, caused by the varying properties of the filters used.

The sensitivity/robustness tradeoff has not been discussed in depth in earlier work on key-dependent wavelet transforms [12, 37] in the context of robust hashing. As already mentioned, in the CBIR scenario [37], the high robustness of the feature extraction itself prevents a satisfactory key-dependency of the hash string. In [12], parameterized (Pollen) wavelet filters as well as key-dependent wavelet packet subband structures have been investigated for their usefulness in the context of an authentication hashing scheme. Key-dependency, keyspace, and attack resistance have been found to be in sensible ranges; however, the sensitivity/robustness tradeoff has not been investigated explicitly. Nevertheless, the high variation in the Hamming distances found there suggests varying sensitivity, as found in this work. In recent work [42], we have investigated key-dependent wavelet packet subband structures as a means to add key-dependency to the JPEG2000 PBHash and found robustness to be significantly reduced as compared to the standard pyramidal subband structure, while sensitivity was found to be almost identical to the standard case. Parameterized lifting as employed in this work is clearly better suited to add key-dependency than key-dependent wavelet packet structures, at least in the case of the JPEG2000 PBHash.

3.3. Attack Resistance

The aim of adding key-dependency to the JPEG2000 PBHash is to prevent the attacks described in Section 2.3. When the key-dependent hashing scheme is used, an attacker does not know which key is used to compute the hash string for an image subject to authentication. She can only choose an arbitrary key and perform the attack as described using this key in hash generation (i.e., both the original and the modified images are JPEG2000-compressed using the key chosen for the attack, and the part of the bitstream required for the hash is interchanged). The attacker then hopes that the Hamming distance between the original image and her attacked version will also be small for keys other than the one used in her attack. Figure 21 shows an attacked version of the modified Lena image and a histogram of Hamming distances between 50-byte hash strings of the original and attacked images when 100 different random keys are used in authentication (the same key is used for both original and attacked versions).

It is clearly visible that only one key results in distance 0, which is the case where the authentication key is identical to the key used for the attack. Two more distances are around 0.2, and the rest lie between 0.4 and 0.6. We see that the key-dependency scheme enables the JPEG2000 PBHash to identify the attacked image reliably. Figure 22 shows the same effect for the attacked Plane image, where all Hamming distances lie between 0.4 and 0.6 except for the single filter used in the attack.
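The evaluation protocol of this subsection (the same key hashes both the original and the attacked image, repeated over many random keys) can be sketched as below. `toy_keyed_hash` is a hypothetical stand-in built on SHAKE-256 purely to make the sketch runnable; it is not robust and is not the JPEG2000 PBHash, which would take its place.

```python
import hashlib
from typing import Callable, Iterable, List

KeyedHash = Callable[[bytes, int, int], bytes]  # (image data, key, hash length) -> hash


def normalized_hamming(hash_a: bytes, hash_b: bytes) -> float:
    """Fraction of differing bits between two equal-length hash strings."""
    differing_bits = sum(bin(a ^ b).count("1") for a, b in zip(hash_a, hash_b))
    return differing_bits / (8 * len(hash_a))


def toy_keyed_hash(image: bytes, key: int, length: int) -> bytes:
    """Stand-in only: NOT a robust hash, used just to exercise the protocol."""
    return hashlib.shake_256(key.to_bytes(8, "big") + image).digest(length)


def attack_distances(hash_fn: KeyedHash, original: bytes, attacked: bytes,
                     keys: Iterable[int], length: int = 50) -> List[float]:
    """One distance per authentication key; the same key hashes both images."""
    return [normalized_hamming(hash_fn(original, k, length), hash_fn(attacked, k, length))
            for k in keys]


def count_accepted(distances: List[float], threshold: float) -> int:
    """Number of keys for which the attacked image would pass authentication."""
    return sum(1 for d in distances if d <= threshold)


dists = attack_distances(toy_keyed_hash, b"original image", b"attacked image", range(100))
print(count_accepted(dists, 0.1))
```

With a real robust hash, the histogram of `dists` corresponds to the plots discussed here: an attack succeeds only if many keys, not just the one used in the attack, yield small distances.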

Figure 23 verifies for the Plane image that the attack is successfully prevented with the 50-byte hash also for different decomposition depths.

When the hash length is increased to 128 bytes, the attack gets more difficult, since a larger share of the bitstream data needs to be exchanged between the original and modified versions, possibly compromising the image quality of the attacked version. Figures 24 and 25 illustrate this case for the Lena and Goldhill images.

While the image quality of the attacked version might still be sufficient for some applications, the Hamming distance histograms clearly indicate that the attack is prevented also under these settings (in these experiments, the key used for producing the attacked versions is not included in the keys used for authentication).

In Section 2.3, we have also demonstrated the collision attack against the JPEG2000 PBHash, where an image has been attacked to produce the same hash as an arbitrary original image. We cover the case of a 16-byte hash, since the attacked images shown in Figure 9 hardly meet any quality requirement. In Figure 26, we visualize the attacked Lena image modified to exhibit the hash string of the Plane image. Figure 27 covers the reverse situation. Again, the attacker has to select an arbitrary key for conducting the attack. All she can do is hope that the hash string of the attacked image produced by other keys is similar to the string she created in the attack.

The histograms shown in Figures 26 and 27 show that, again, the attack can be prevented reliably. Most Hamming distances between the attacked image and the original image are above 0.2, and all of them are above 0.1. The hash string of the attacked image no longer exhibits a high degree of similarity to the original image in the authentication. The same is of course true with respect to the original version of the attacked image (the histograms look similar but are not shown).

The same results can be obtained for the settings corresponding to the images shown in Figure 9; however, since the visual quality of the attacked images is rather low, we do not give the plots here.

3.4. Key-Dependency and Security

Recently, a method for measuring the security of robust image hashing algorithms has been proposed [16]. It is based on unicity distance, a concept pioneered by Shannon [43] in 1949, which states that the amount of uncertainty in an encryption key decreases with each observed plaintext/ciphertext pair. For image hashing, this means that the secret key can be estimated when the key is reused multiple times on different input images. In this case, the unicity distance of a hashing scheme determines how often (i.e., for how many different images) a key can be reused before it can be uniquely determined.

Let $h = H(I, K)$, where $H$ is the image hash function, $I$ the input image, $K$ the secret key, and $h$ the resulting hash vector. When we use the same key $K$ $n$ times for different input images, we get pairs of images and hash vectors $(I_1, h_1), (I_2, h_2), \ldots, (I_n, h_n)$. The conditional entropy of the secret key $K$ can then be denoted by $\mathcal{H}\bigl(K \mid (I_1, h_1), \ldots, (I_n, h_n)\bigr)$. In general, this conditional entropy decreases as $n$ increases. To determine the unicity distance of the image-hashing algorithm, the observed image-hash pairs are taken as the input to a key estimation algorithm. The output of this algorithm (i.e., the estimated secret key) is gradually refined as the number of observed image-hash pairs increases. It is expected that the estimated key gets closer and closer to the actual key $K$, until the two can be considered identical. The number of image-hash pairs required to recover the key $K$ is denoted by "unicity distance."
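The unicity-distance notion can be stated compactly. Writing $h_i = H(I_i, K)$ for the hash of image $I_i$ under the secret key $K$ and $\mathcal{H}(\cdot \mid \cdot)$ for conditional entropy, the first relation below holds because conditioning on an additional observation cannot increase entropy. The second is one way (an assumption of this sketch, not a formula quoted from [16]) to express the unicity distance $n^{*}$ as the smallest number of observed pairs after which the remaining key uncertainty falls below a small tolerance $\varepsilon$.

```latex
\mathcal{H}\bigl(K \mid (I_1,h_1),\ldots,(I_{n+1},h_{n+1})\bigr)
  \le \mathcal{H}\bigl(K \mid (I_1,h_1),\ldots,(I_n,h_n)\bigr),
\qquad
n^{*} = \min\Bigl\{\, n : \mathcal{H}\bigl(K \mid (I_1,h_1),\ldots,(I_n,h_n)\bigr) \le \varepsilon \,\Bigr\}.
```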

The iterative search algorithm suggested for estimating the key [16] relies on the assumption that the Hamming distances between hashes derived from similar keys get smaller the more similar the keys get. The sensitivity of the key-dependency scheme to small changes in the key therefore has a major impact on the convergence speed of this algorithm.
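To make the role of this assumption concrete, the following is a generic greedy coordinate search over real-valued key parameters; it is an illustrative stand-in, not the algorithm of [16]. The `distance` callable is hypothetical: in a real attack it would compare the observed hashes with hashes recomputed under the candidate key.

```python
from typing import Callable, List, Sequence


def refine_key(estimate: Sequence[float],
               distance: Callable[[List[float]], float],
               step: float, max_rounds: int = 100) -> List[float]:
    """Greedy coordinate search: nudge one parameter at a time by +/- step and
    keep the change whenever it lowers the distance. This only makes progress
    if the distance shrinks as the estimate approaches the true key."""
    key = list(estimate)
    best = distance(key)
    for _ in range(max_rounds):
        improved = False
        for i in range(len(key)):
            for delta in (step, -step):
                candidate = key.copy()
                candidate[i] += delta
                d = distance(candidate)
                if d < best:
                    key, best, improved = candidate, d, True
                    break
        if not improved:
            break
    return key


true_key = [0.3, -1.2]


def smooth(k: List[float]) -> float:
    """Distance that decreases steadily as k approaches true_key."""
    return sum(abs(a - b) for a, b in zip(k, true_key))


def needle(k: List[float]) -> float:
    """Distance that is 0 only at the exact key and 0.5 everywhere else."""
    return 0.0 if all(abs(a - b) < 1e-9 for a, b in zip(k, true_key)) else 0.5


print(refine_key([0.0, 0.0], smooth, 0.1))  # converges close to true_key
print(refine_key([0.0, 0.0], needle, 0.1))  # stays at [0.0, 0.0]
```

The needle-shaped distance mimics the situation examined in the following experiments, where Hamming distances drop only in a very small neighborhood of the correct key: the greedy search receives no signal and stops immediately.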

To investigate this issue in the context of the key-dependent JPEG2000 PBHash, we list in Table 1 the lifting parameters derived from a specific key when decomposition depth 5 is employed. We further assume that 9 out of 10 parameters used for JPEG2000 PBHash generation are already set to the correct value and only one parameter is changed slightly. Table 2 shows the Hamming distances between the 50-byte hash computed with all parameters correct and a hash computed where a single vertical parameter is slightly incorrect (the value used, as compared to Table 1, is given in the table; Hamming distances are given for 10 images).

Table 1: Lifting parameters derived from a specific key (decomposition depth 5).

Table 2: Hamming distances of 10 images after varying one parameter.

We observe that when the resolution level-1 vertical parameter is slightly incorrect, all hash values still show significant Hamming distances. For incorrect level-2 and level-3 parameters, some images exhibit a Hamming distance of 0 (e.g., 3 out of 10 at level 3), while others show large distances. Only at level 4 (and level 5) do all images consistently show a distance of 0 when all other parameters are known exactly. Note that these observations have been made under the assumption that 9 out of 10 parameters are already correct, without arguing how this could be achieved in an actual key-estimation algorithm.
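The single-parameter experiment can be sketched as follows. The mapping from key to lifting parameters is an assumption here: the sketch uses a key-seeded pseudo-random generator that draws one horizontal and one vertical parameter per resolution level from a hypothetical range, which yields the 10 parameters for decomposition depth 5. The function names and the range are illustrative only.

```python
import random
from typing import Dict, Tuple

Params = Dict[Tuple[int, str], float]  # (resolution level, "h" or "v") -> parameter


def derive_params(key: int, depth: int, param_range: Tuple[float, float]) -> Params:
    """Hypothetical key schedule: one horizontal and one vertical lifting
    parameter per resolution level, drawn from a key-seeded PRNG."""
    rng = random.Random(key)
    low, high = param_range
    return {(level, direction): rng.uniform(low, high)
            for level in range(1, depth + 1)
            for direction in ("h", "v")}


def perturb_one(params: Params, level: int, direction: str, delta: float) -> Params:
    """Copy of params with a single parameter shifted by delta (all others correct)."""
    changed = dict(params)
    changed[(level, direction)] += delta
    return changed


correct = derive_params(key=12345, depth=5, param_range=(-2.0, 2.0))  # range is illustrative
wrong_level1_vertical = perturb_one(correct, level=1, direction="v", delta=0.01)
print(len(correct))  # prints 10
```

Each perturbed parameter set would then hash the same images as the correct set, and the resulting Hamming distances would be compared, as in Table 2. Note that `random.Random` is not cryptographically secure; a deployed key schedule would use a proper key-derivation function.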

In order to investigate the sensitivity with respect to small key changes in more detail, we determine the Hamming distances obtained when varying the vertical parameter of each of the five resolution levels within the interval […] using a step size of […]. This leads to […] Hamming distances for each resolution level. Figures 28 and 29 show the results obtained for the Lena image. When varying the level-1 parameter, we note that the Hamming distance becomes 0 only within an extremely small range. For the level-2 and level-3 parameters as well, only a small interval around the correct value leads to a Hamming distance of 0 (i.e., […]). Only for level 4 (and level 5, not shown) does the entire range investigated lead to a Hamming distance of 0.
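The sweep over one vertical parameter can be sketched as below. The interval half-width and step size are free parameters of this sketch, the distances would come from hashing the image once per grid value, and the helper names are hypothetical; the distances in the usage example are made up.

```python
from typing import List, Optional, Sequence, Tuple


def sweep_grid(correct: float, half_width: float, step: float) -> List[float]:
    """Evenly spaced values from correct - half_width to correct + half_width."""
    count = int(round(2 * half_width / step)) + 1
    return [correct - half_width + i * step for i in range(count)]


def zero_distance_interval(values: Sequence[float], distances: Sequence[float],
                           correct: float) -> Optional[Tuple[float, float]]:
    """Contiguous range of grid values around the correct parameter whose
    Hamming distance is exactly 0, or None if even the closest value differs."""
    center = min(range(len(values)), key=lambda i: abs(values[i] - correct))
    if distances[center] != 0:
        return None
    low = high = center
    while low > 0 and distances[low - 1] == 0:
        low -= 1
    while high < len(values) - 1 and distances[high + 1] == 0:
        high += 1
    return values[low], values[high]


grid = sweep_grid(correct=0.0, half_width=1.0, step=0.25)
print(len(grid))  # prints 9
synthetic = [0.4, 0.3, 0.2, 0.0, 0.0, 0.0, 0.1, 0.5, 0.5]  # made-up distances
print(zero_distance_interval(grid, synthetic, correct=0.0))  # prints (-0.25, 0.25)
```

A narrow zero-distance interval (as for the level-1 parameter) means a key search gets no signal until it is already very close to the correct value; an interval spanning the whole grid (as for level 4) means that the parameter barely influences the hash.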

The assumption made so far, namely that all but one parameter are determined correctly, is already difficult to satisfy. Considering this fact, together with the observation that the iterative key search procedure has problems achieving convergence even with all but one parameter correct (at least when the level-1 parameter is not yet correct), we believe that the unicity distance will be rather large for the key-dependent JPEG2000 PBHash. In fact, the assumption that the Hamming distances between hashes derived from similar keys get smaller the more similar the keys get only holds in very small neighborhoods. Therefore, instead of an iterative key estimation technique based on successive refinement, the only way to obtain the correct key would involve a rather costly random search through a significant share of the keyspace until a configuration with a small Hamming distance is found, which can then be systematically improved.

Consequently, we estimate the key-dependent JPEG2000 PBHash to have a rather large unicity distance.

4. Conclusion and Future Work

Key-dependency is added to a JPEG2000 packet data-based hashing scheme by employing a parameterized lifting scheme in the wavelet decomposition stage. Attacks demonstrated against the scheme without key-dependency can be prevented effectively in this manner. The security of the scheme in terms of unicity distance is also assumed to be high. However, key-dependency comes at a certain cost for this scheme: due to the reduced sensitivity of some potentially employed filters, the hash length has to be increased as compared to the scheme without key-dependency, which in turn reduces robustness.

In future work, we will investigate how to add key-dependency to the JPEG2000 PBHash without affecting sensitivity too much: while we have found significant variations in sensitivity among the different decompositions and filters employed, it is not yet clear whether it is possible to identify subsets of the lifting parameter range where these variations could be bounded. An alternative approach is to investigate different types of key-dependency for wavelet transforms, like isotropic or anisotropic wavelet packets. Additionally, we will estimate the magnitude of the available keyspace (focusing on a decomposition level-dependent discretization of the parameter range), and we will determine the sensitivity against key modifications for the scheme in more detail to provide an approximation of an actual unicity distance value. In particular, we will investigate how to make the key-estimation procedure separable, that is, conduct key estimation for each decomposition level separately.

References

[1] Fridrich J: Visual hash for oblivious watermarking. In Security and Watermarking of Multimedia Contents II, January 2000, San Jose, Calif, USA, Proceedings of SPIE Edited by: Wong PW, Delp EJ III. 3971: 286-294.

[2] Fridrich J, Goljan M: Robust hash functions for digital watermarking. Proceedings of IEEE International Conference on Information Technology: Coding and Computing, March 2000, Las Vegas, Nev, USA 178-183.

[3] Lu C-S, Liao H-YM: Structural digital signature for image authentication: an incidental distortion resistant scheme. Proceedings of the ACM Workshops on Multimedia, October-November 2000, Los Angeles, Calif, USA 115-118.

[4] Monga V, Mihçak MK: Robust image hashing via non-negative matrix factorizations. Proceedings of IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP '06), May 2006, Toulouse, France 2: 225-228.

[5] Monga V, Banerjee A, Evans BL: A clustering based approach to perceptual image hashing. IEEE Transactions on Information Forensics and Security 2006,1(1):68-79. 10.1109/TIFS.2005.863502

[6] Radhakrishnan R, Xiong Z, Memon ND: Security of the visual hash function. In Security and Watermarking of Multimedia Contents V, January 2003, Santa Clara, Calif, USA, Proceedings of SPIE Edited by: Delp EJ III, Wong PW. 5020: 644-652.

[7] Skrepth CJ, Uhl A: Robust hash functions for visual data: an experimental comparison. In Proceedings of the 1st Iberian Conference on Pattern Recognition and Image Analysis (IbPRIA '03), June 2003, Mallorca, Spain, Lecture Notes in Computer Science. Volume 2652. Edited by: Perales López FJ, Campilho AC, de la Blanca NP, Sanfeliu A. Springer; 986-993.

[8] Swaminathan A, Mao Y, Wu M: Robust and secure image hashing. IEEE Transactions on Information Forensics and Security 2006,1(2):215-230. 10.1109/TIFS.2006.873601

[9] Venkatesan R, Koon S-M, Jakubowski MH, Moulin P: Robust image hashing. Proceedings of the International Conference on Image Processing (ICIP '00), September 2000, Vancouver, BC, Canada 3: 664-666.

[10] Mihçak MK, Venkatesan R: New iterative geometric methods for robust perceptual image hashing. Proceedings of the Workshop on Security and Privacy in Digital Rights Management, November 2001, Philadelphia, Pa, USA 2320: 13-21.

[11] Swaminathan A, Mao Y, Wu M: Security of feature extraction in image hashing. Proceedings of IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP '05), March 2005, Philadelphia, Pa, USA 2: 1041-1044.

[12] Meixner A, Uhl A: Security enhancement of visual hashes through key dependent wavelet transformations. In Proceedings of the 13th International Conference on Image Analysis and Processing (ICIAP '05), September 2005, Cagliari, Italy, Lecture Notes in Computer Science. Volume 3617. Edited by: Roli F, Vitulano S. Springer; 543-550.

[13] Özer H, Sankur B, Memon N, Anarim E: Perceptual audio hashing functions. EURASIP Journal on Applied Signal Processing 2005,2005(12):1780-1793. 10.1155/ASP.2005.1780

[14] Monga V, Evans BL: Perceptual image hashing via feature points: performance evaluation and tradeoffs. IEEE Transactions on Image Processing 2006,15(11):3452-3465.

[15] Meixner A, Uhl A: Analysis of a wavelet-based robust hash algorithm. In Security, Steganography, and Watermarking of Multimedia Contents VI, January 2004, San Jose, Calif, USA, Proceedings of SPIE Edited by: Delp EJ III, Wong PW. 5306: 772-783.

[16] Mao Y, Wu M: Unicity distance of robust image hashing. IEEE Transactions on Information Forensics and Security 2007,2(3):462-467.

[17] Norcen R, Uhl A: Robust authentication of the JPEG2000 bitstream. Proceedings of the 6th IEEE Nordic Signal Processing Symposium (NORSIG '04), June 2004, Espoo, Finland 121-124.

[18] Norcen R, Uhl A: Robust visual hashing using JPEG2000. In Proceedings of the 8th IFIP TC6/TC11 Conference on Communications and Multimedia Security (CMS '04), September 2004, Lake Windermere, UK. Edited by: Chadwick D, Preneel B. Springer; 223-236.

[19] Taubman D, Marcellin MW: JPEG2000: Image Compression Fundamentals, Standards and Practice. Kluwer Academic Publishers, Dordrecht, The Netherlands; 2002.

[20] Grosbois R, Gerbelot P, Ebrahimi T: Authentication and access control in the JPEG2000 compressed domain. Applications for Digital Image Processing XXIV, July 2001, San Diego, Calif, USA, Proceedings of SPIE 4472: 95-104.

[21] Apostolopoulos J, Wee S, Dufaux F, Ebrahimi T, Sun Q, Zhang Z: The emerging JPEG2000 security (JPSEC) standard. Proceedings of IEEE International Symposium on Circuits and Systems (ISCAS '06), May 2006, Island of Kos, Greece 3882-3885.

[22] Peng C, Deng RH, Wu Y, Shao W: A flexible and scalable authentication scheme for JPEG2000 image codestreams. Proceedings of the 11th ACM International Conference on Multimedia (MULTIMEDIA '03), November 2003, Berkeley, Calif, USA 433-441.

[23] Tabesh A, Bilgin A, Krishnan K, Marcellin MW: JPEG2000 and motion JPEG2000 content analysis using codestream length information. Proceedings of the Data Compression Conference (DCC '05), March 2005, Snowbird, Utah, USA 329-337.

[24] Descampe A, Vandergheynst P, De Vleeschouwer C, Macq B: Coarse-to-fine textures retrieval in the JPEG2000 compressed domain for fast browsing of large image databases. In Proceedings of the International Workshop on Multimedia Content Representation, Classification and Security (MRCS '06), September 2006, Istanbul, Turkey, Lecture Notes in Computer Science. Volume 4105. Edited by: Günsel B, Jain AK, Tekalp AM, Sankur B. Springer; 282-289.

[25] Liu C, Mandal M: Fast image indexing based on JPEG2000 packet header. Proceedings of the ACM Workshops on Multimedia: Multimedia Information Retrieval, October 2001, Ottawa, Ontario, Canada 46-49.

[26] Mandal MK, Liu C: Efficient image indexing techniques in the JPEG2000 domain. Journal of Electronic Imaging 2004,13(1):182-190. 10.1117/1.1633286

[27] Petitcolas FAP, Steinebach M, Raynal F, Dittmann J, Fontaine C, Fatès N: Public automated web-based evaluation service for watermarking schemes: StirMark benchmark. Security and Watermarking of Multimedia Contents III, January 2001, San Jose, Calif, USA, Proceedings of SPIE 4314: 575-584.

[28] Fridrich J, Baldoza AC, Simard RJ: Robust digital watermarking based on key-dependent basis functions. In Proceedings of the 2nd International Workshop on Information Hiding (IH '98), April 1998, Portland, Ore, USA, Lecture Notes in Computer Science. Volume 1525. Edited by: Aucsmith D. Springer; 143-157.

[29] Fridrich J: Key-dependent random image transforms and their applications in image watermarking. Proceedings of the International Conference on Imaging Science, Systems, and Technology (CISST '99), June 1999, Las Vegas, Nev, USA 237-243.

[30] Unnikrishnan G, Singh K: Double random fractional Fourier-domain encoding for optical security. Optical Engineering 2000,39(11):2853-2859. 10.1117/1.1313498

[31] Djurovic I, Stankovic S, Pitas I: Digital watermarking in the fractional Fourier transformation domain. Journal of Network and Computer Applications 2001,24(2):167-173. 10.1006/jnca.2000.0128

[32] Engel D, Uhl A: Parameterized biorthogonal wavelet lifting for lightweight JPEG2000 transparent encryption. Proceedings of the 7th Workshop on Multimedia and Security (MM-SEC '05), August 2005, New York, NY, USA 63-70.

[33] Pommer A, Uhl A: Selective encryption of wavelet-packet encoded image data: efficiency and security. Multimedia Systems 2003,9(3):279-287. 10.1007/s00530-003-0099-y

[34] Dietl WM, Meerwald P, Uhl A: Protection of wavelet-based watermarking systems using filter parametrization. Signal Processing 2003,83(10):2095-2116. 10.1016/S0165-1684(03)00170-1

[35] Dietl WM, Uhl A: Robustness against unauthorized watermark removal attacks via key-dependent wavelet packet subband structures. Proceedings of IEEE International Conference on Multimedia and Expo (ICME '04), June 2004, Taipei, Taiwan 3: 2043-2046.

[36] Huang J, Hu J, Huang D, Shi YQ: Improve security of fragile watermarking via parameterized wavelet. Proceedings of the International Conference on Image Processing (ICIP '04), October 2004, Singapore 2: 721-724.

[37] Meixner A, Uhl A: Robustness and security of a wavelet-based CBIR hashing algorithm. Proceedings of the 8th Workshop on Multimedia and Security (MM-Sec '06), September 2006, Geneva, Switzerland 140-145.

[38] Zhong G, Cheng L, Chen H: A simple 9/7-tap wavelet filter based on lifting scheme. Proceedings of the International Conference on Image Processing (ICIP '01), October 2001, Thessaloniki, Greece 2: 249-252.

[39] Daubechies I, Sweldens W: Factoring wavelet transforms into lifting steps. Journal of Fourier Analysis and Applications 1998,4(3):245-267.

[40] Cohen A, Daubechies I, Feauveau J-C: Biorthogonal bases of compactly supported wavelets. Communications on Pure and Applied Mathematics 1992,45(5):485-560. 10.1002/cpa.3160450502

[41] Uhl A: Image compression using non-stationary and inhomogeneous multiresolution analyses. Image and Vision Computing 1996,14(5):365-371.

[42] Laimer G, Uhl A: Improving security of JPEG2000-based robust hashing using key-dependent wavelet packet subband structures. In Proceedings of the 7th WSEAS International Conference on Wavelet Analysis & Multirate Systems (WAMUS '07), October 2007, Arcachon, France Edited by: Dondon P, Mladenov V, Impedovo S, Cepisca S. 127-132.

[43] Shannon CE: Communication theory of secrecy systems. Bell System Technical Journal 1949,28(4):656-715.

Acknowledgments

This work has been partially supported by the Austrian Science Fund, Project no. 15170 and by the European Commission through the IST Programme under Contract IST-2002-507932 ECRYPT. The use of Dominik Engel's lifting parameterization implementation is gratefully acknowledged.

Rights and permissions

Open Access This article is distributed under the terms of the Creative Commons Attribution 2.0 International License (https://creativecommons.org/licenses/by/2.0), which permits unrestricted use, distribution, and reproduction in any medium, provided the original work is properly cited.

About this article

Cite this article

Laimer, G., Uhl, A. Key-Dependent JPEG2000-Based Robust Hashing for Secure Image Authentication. EURASIP J. on Info. Security 2008, 895174 (2008). https://doi.org/10.1155/2008/895174

DOI: https://doi.org/10.1155/2008/895174
